Full Disclosure Nikkas

And the countdown begins to our first hands-on coverage.

www.digitalfoundry.net

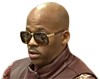

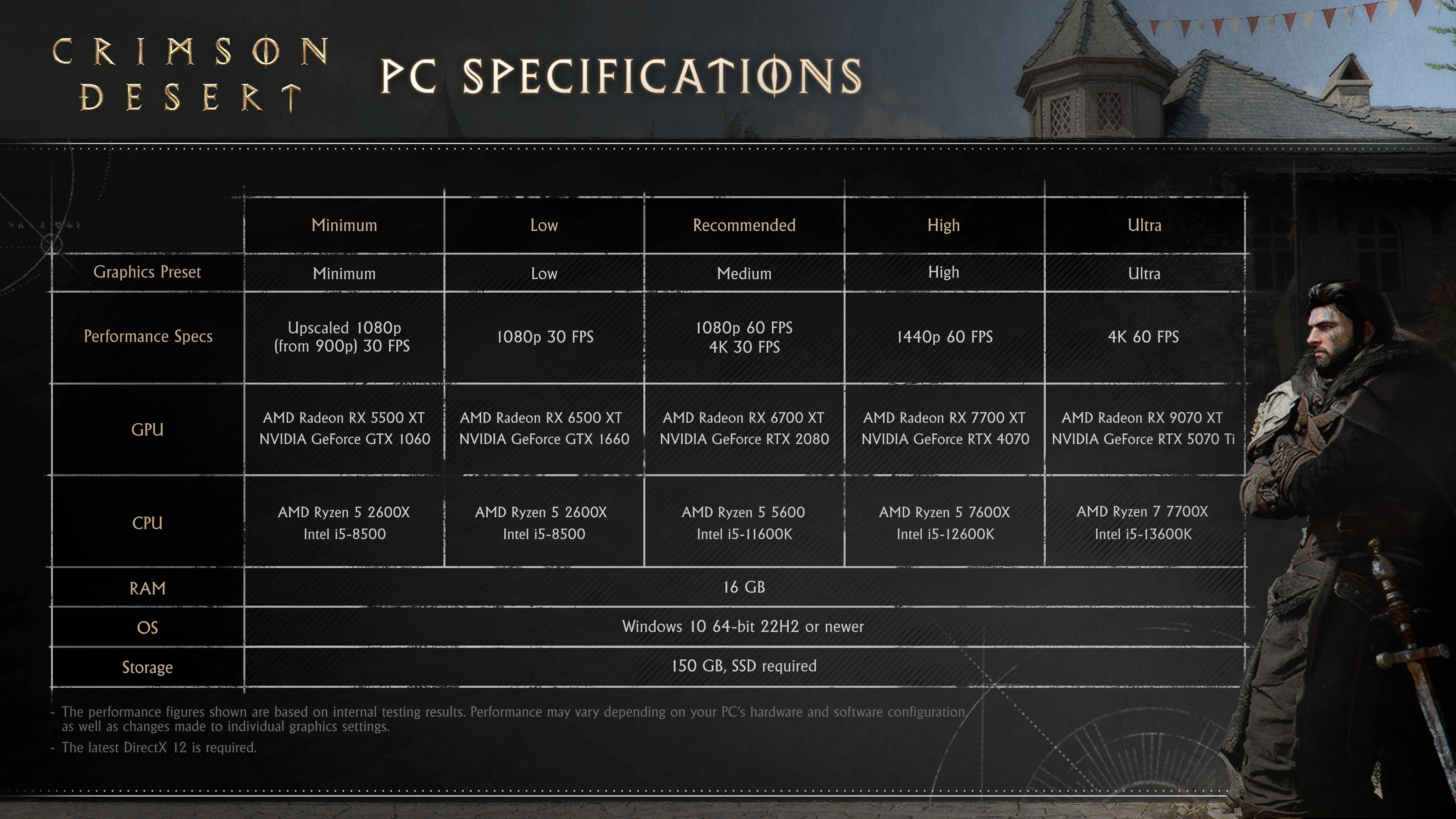

Crimson Desert is shaping up to be by far the most eagerly anticipated game so far in 2026 - and based on everything we've looked at so far, the hype is real. Not only that, but the exhaustive recommended specifications delivered by Pearl Abyss are also quite special. This is a developer projecting an air of confidence about its game, to the point where we're looking at full disclosure not just on PC, but on consoles and even PC handhelds too.

Internally here at Digital Foundry, we're busy working on our coverage and that'll begin with a preliminary (but still very detailed!) look at the PlayStation 5 Pro version of the game before we move onto a wider range of topics: we'll be looking at the highest-end PC experience, we'll be delivering our own optimised PC settings along with console equivalents (effectively Pearl Abyss' own choices for more mainstream hardware) and - of course - we'll be looking to provide you with a complete platform comparison once we have access to all versions of the game.

Expect our first look at consoles very soon, but in looking into Crimson Desert, we had a bunch of questions we put to Pearl Abyss - and that starts with the core technology, dubbed the BlackSpace Engine. The "black" part comes from the developer's prior game, Black Desert Online. Space? It refers to a more general "universe".

But that just sets the stage for the interview to come.

Digital Foundry: The game has a captivating day-night cycle and indirect lighting that appears to be achieved by ray tracing. How is diffuse global illumination achieved in the game for the sun and local lights?

Pearl Abyss: We wanted to simulate the natural phenomenon of light as closely as possible. First, we calculate luminance of the sun and moon in space from the defined intensities. Then we calculate atmospheric scattering of the sun and moon lights in the atmosphere of Pywel. The survived photons become sources of directional light, and the scattered photons become the sources of sky light. We generate Surfels for all meshes around the camera and update their radiance offscreen. When calculating irradiance for a surface, we generate rays and trace hit information for them. When a ray hits a surface, the incident irradiance can be obtained from the Surfel-based Radiance Caches and the calculated directional lighting using shadow maps. When a ray does not hit anything, the incident irradiance can be obtained from the sky lighting in cubemap form.

The reason why we do not include the sun and moon lights into the Surfel-based Radiance Caches is to eliminate indirect lighting delays for the directional lights. For local lights, we build a tree structure of light hierarchy and sample the lights from it when we are calculating local lighting, which we call Many Lights Sampling. There is no theoretical limit to the number of lights, but to reduce sampling cost and memory usage, we limit the number of lights in the tree to 32,767. The visibility of each sampled light is tested using ray tracing, and then we can calculate lighting for the light.

Since the sampling and raytracing budgets are limited, the naive lighting results are quite noisy. To reduce noise, we reuse the sampling results of spatial neighbors for better sampling distribution and apply simple denoising for the diffuse lighting results. The specular lighting results are merged with the specular GI results to avoid separate denoising costs and to maintain lighting consistency. Many Lights are also sampled into the Surfel-based Radiance Caches to provide indirect lighting, but we exclude high-frequency lights to avoid ghosting.
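To picture what sampling lights from a tree can look like, here is a minimal sketch. It is not Pearl Abyss' implementation: it assumes a plain binary tree that stores only summed light power, whereas production light trees usually also store spatial bounds. The function names are hypothetical, and only the 32,767 cap comes from the interview.

```python
import random

MAX_LIGHTS = 32_767  # cap quoted in the interview


def build_light_tree(powers):
    """Build a flat binary tree; each inner node holds the summed power of its children."""
    powers = [float(p) for p in powers]
    if not 0 < len(powers) <= MAX_LIGHTS:
        raise ValueError("light count out of range")
    # Pad the leaf level to a power of two; padding leaves have zero power.
    size = 1
    while size < len(powers):
        size *= 2
    tree = [0.0] * (2 * size)
    tree[size:size + len(powers)] = powers
    for i in range(size - 1, 0, -1):
        tree[i] = tree[2 * i] + tree[2 * i + 1]
    if tree[1] <= 0.0:
        raise ValueError("total light power must be positive")
    return tree


def sample_light(tree, rng):
    """Walk from the root to a leaf, choosing each child in proportion to its power.

    Returns (light_index, probability) so the caller can divide the light's
    contribution by the probability of having picked it.
    """
    size = len(tree) // 2
    node, pdf = 1, 1.0
    while node < size:
        left, right = tree[2 * node], tree[2 * node + 1]
        p_left = left / (left + right)
        if rng.random() < p_left:
            node, pdf = 2 * node, pdf * p_left
        else:
            node, pdf = 2 * node + 1, pdf * (1.0 - p_left)
    return node - size, pdf


if __name__ == "__main__":
    tree = build_light_tree([1.0, 3.0, 0.5, 10.0])
    print(sample_light(tree, random.Random(1)))
```

In the pipeline described above, each sampled light would then get a ray-traced visibility test before its lighting is added; that step is left out of the sketch.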

Digital Foundry: Do you use an RT probe approach or per-pixel? Combined with short screen-space rays? How many bounces? Is there a cache? The more nitty gritty details, the better!

Pearl Abyss: Basically we shoot GI rays per-pixel. However, the rays-per-pixel ratio varies depending on quality settings. Diffuse GI and Many Lights use 1/16~1 rays per pixel and Specular GI uses 1/4~1 rays per pixel. After shooting and evaluating radiance for diffuse GI rays, we build Tiled Radiance Caches to resolve the noise from insufficient rays, which are screen-based tile representations over 32x32 pixels. Then the Tiled Radiance Caches are denoised and the Clustered Radiance Caches are also built from Tiled Radiance Caches to handle many depth layers on screen.
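To put those ratios in concrete terms, here is a back-of-the-envelope sketch. The 3840x2160 resolution is an assumption for illustration only (the interview does not state the internal render resolution), and the helper names are hypothetical.

```python
import math

TILE = 32  # tile edge in pixels, as quoted for the Tiled Radiance Caches


def ray_budget(width, height, rays_per_pixel):
    """Total rays per frame for a given rays-per-pixel ratio."""
    return int(width * height * rays_per_pixel)


def tile_count(width, height, tile=TILE):
    """Number of screen tiles, rounding partial tiles up at the edges."""
    return math.ceil(width / tile) * math.ceil(height / tile)


if __name__ == "__main__":
    w, h = 3840, 2160  # assumed resolution, not from the interview
    print(ray_budget(w, h, 1 / 16))  # lowest quoted diffuse GI ratio
    print(ray_budget(w, h, 1))       # highest quoted ratio
    print(tile_count(w, h))
    print(TILE * TILE // 16)         # rays landing in one full tile at 1/16
```

At the assumed resolution, the lowest diffuse GI setting works out to roughly half a million rays per frame, or 64 rays per full 32x32 tile, which gives a feel for why the per-tile caches need denoising.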

Although the ray generation itself only uses single indirect bounce rays, it behaves like a pseudo-multiple bounce because the radiance cache updates form a feedback loop. When sampling the Tiled Radiance Caches at a pixel, screen-space rays are used to estimate the visibility of the tile. We also use SSAO to preserve pixel-level occlusion details. When sampling radiance for a hit ray, the Surfel-based Radiance Caches described above are used. There are other attempts such as Ray Guiding and ReSTIR GI to compensate for noise caused by an insufficient number of ray samples.
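The feedback-loop point can be illustrated with a toy model. This sketch assumes a closed scene in which every ray hits a surface with the same albedo and the same emitted radiance, so a single scalar stands in for the whole cache; the names are hypothetical and none of this is the engine's actual update rule.

```python
def cached_radiance(emitted, albedo, frames):
    """Update a one-value radiance cache once per frame with a single bounce.

    Each update reads the previous frame's cache, so after n frames the cache
    holds n terms of the multi-bounce series emitted * (1 + albedo + albedo^2 + ...).
    """
    cache = 0.0
    for _ in range(frames):
        cache = emitted + albedo * cache
    return cache


if __name__ == "__main__":
    for n in (1, 2, 3, 60):
        print(n, cached_radiance(1.0, 0.5, n))
```

With an albedo of 0.5 the cache climbs 1.0, 1.5, 1.75 and so on towards 2.0, the full infinite-bounce value `emitted / (1 - albedo)`, even though each frame only traces one bounce. The cost is that the extra bounces arrive over several frames rather than at once.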

Digital Foundry: What objects, materials and textures are included in the game's bounding volume hierarchy and how far does it go into the distance?

Pearl Abyss: Almost all objects, including terrain, buildings, props, trees and characters, are included in the ray tracing acceleration BVH; the exceptions are grass and very small objects. However, due to limitations in memory budget and raytracing performance, the distance of raytracing BVH is limited to 100-150m. The objects at distances beyond the BVH range are represented using SDFs up to about 2km. Since the Hit Shader divergence is critical to ray tracing performance, we use simplified representations of original materials. For complex multi-texturing on characters, we bake them into a single texture for each character. The emissive materials are included in the raytracing BVH to represent indirect reflections.
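The distance tiers in that answer can be summarised in a small sketch. The exact cut-offs are assumptions: the interview gives the BVH range as 100-150m (the upper figure is used here) and the SDF range as "about 2km". The function name and return labels are hypothetical.

```python
BVH_LIMIT_M = 150.0   # assumed: upper end of the quoted 100-150 m range
SDF_LIMIT_M = 2000.0  # assumed: "about 2km"


def trace_representation(distance_m):
    """Return which scene representation a ray would query at this distance."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    if distance_m <= BVH_LIMIT_M:
        return "bvh"   # hardware ray tracing against simplified materials
    if distance_m <= SDF_LIMIT_M:
        return "sdf"   # signed distance field ray marching
    return "miss"      # nothing to hit; earlier answers describe sky lighting for misses


if __name__ == "__main__":
    for d in (50, 500, 5000):
        print(d, trace_representation(d))
```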

Digital Foundry: How is indirect specular lighting on water bodies, armour and more achieved?

Pearl Abyss: Indirect specular lighting is also calculated using ray tracing. For the systems that do not support ray tracing, SDF ray marching is used instead. We also utilise the SDF ray marching for distant reflections that exceed ray tracing BVH limits. Screen-Space Rays are also used to save on ray tracing budget and achieve accurate reflections. For rough surfaces we fall back to the Tiled Radiance Caches to reduce noise and save on ray tracing budget. Remaining noise is handled with a simple denoiser.

Digital Foundry: Trees extend very far into the distance, how is the extreme LOD achieved?

Pearl Abyss: We use SpeedTree assets for tree meshes. However, to represent distant trees, we build 3D imposters rendered in 8x8 directions. The tree imposters are also baked into Proxy LODs, the final LOD that represents our world. Since the Proxy LODs have no distance limit on systems above the recommended spec, tree imposters also have no distance limit.
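As a rough illustration of how a renderer might pick one of the 8x8 pre-rendered views, here is a sketch. The layout is an assumption: it splits azimuth and elevation into 8 equal bins each, while the interview does not say how the 64 directions are distributed (an octahedral mapping would be another option). The function name is hypothetical.

```python
import math

GRID = 8  # 8x8 directions, as quoted in the interview


def imposter_cell(dx, dy, dz):
    """Map a view direction (z is up) to a (row, col) cell in the 8x8 atlas.

    Assumed layout: columns cover azimuth 0..360 degrees, rows cover
    elevation -90..+90 degrees.
    """
    length = math.sqrt(dx * dx + dy * dy + dz * dz)
    if length == 0.0:
        raise ValueError("direction must be non-zero")
    azimuth = math.atan2(dy, dx) % (2.0 * math.pi)
    elevation = math.asin(max(-1.0, min(1.0, dz / length)))
    col = min(int(azimuth / (2.0 * math.pi) * GRID), GRID - 1)
    row = min(int((elevation + math.pi / 2.0) / math.pi * GRID), GRID - 1)
    return row, col


if __name__ == "__main__":
    print(imposter_cell(1.0, 0.0, 0.0))
    print(imposter_cell(0.0, 0.0, 1.0))
```

A real implementation would likely blend between neighbouring cells to hide popping as the camera moves; this sketch only returns the nearest bin.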

Digital Foundry: Does the game take advantage of mesh shading at all for LOD/Culling?

Pearl Abyss: The tree imposter explained above is implemented using mesh shaders on available hardware. There are also other techniques that utilize mesh shaders.

Digital Foundry: How is close and distant shadow rendering achieved for players, distant objects/trees, and terrain?

Pearl Abyss: There are several layers of shadow maps to represent vast open world environments. First, static shadow maps consist of two cascades, which are updated progressively to cover most of the distant objects, including trees.

Second, terrain shadow map consists of a single cascade, only containing very far terrain meshes. Third, dynamic shadow maps consist of two cascades, which are updated every frame to represent all nearby objects and animated objects, including trees, cloth and characters.

Finally, near-field shadow maps consist of a single cascade that includes only nearby characters for detailed shadow representation. The micro-details on the surfaces are represented using screen-space contact shadows. We also have a ray tracing-based directional light shadow implementation but it's not shipped for several reasons such as high demand for ray tracing budget and missing animated tree updates. We may consider including ray-traced directional light shadows in a future update.

Digital Foundry: Water rendering is really unique, going beyond the standard mesh displacement we usually see: it falls back onto itself to fill in areas now recessed. How is this achieved?

Pearl Abyss: It's a particle simulation that solves the Shallow Water Equation. It simulates up to 250,000 particles near the camera. The particles collide with boundary conditions such as terrain height and shoreline SDFs, and also collide with each other. When particles collide with an obstacle, the pressure between them is increased, causing the upper particles to rise. These particles then fall back down due to gravity.
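For reference, the textbook frictionless shallow water equations, written in the particle-following (Lagrangian) form that suits a particle solver, are shown below. This is the standard form rather than anything confirmed by Pearl Abyss; the symbols are the usual ones, with $h$ the water depth, $\mathbf{u}$ the depth-averaged horizontal velocity, $b$ the terrain height, $g$ gravity and $D/Dt$ the rate of change following a particle.

$$
\frac{Dh}{Dt} = -h\,\nabla\cdot\mathbf{u}, \qquad \frac{D\mathbf{u}}{Dt} = -g\,\nabla\,(h + b)
$$

The first equation says depth grows where the flow converges, which matches the description of water piling up against an obstacle. The second says water accelerates down the slope of its own surface $h + b$, which is what lets it flow back and fill recessed areas.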

Digital Foundry: Which upscalers/image reconstruction techniques does the game support on launch?

Pearl Abyss: We support FSR 3/4 and DLSS 4/4.5 for PC, PSSR for PS5 Pro, and FSR 3 for PS5 base and Xbox Series S/X. Frame generation is only supported on PC, and both FSR and DLSS frame generation are supported.

Digital Foundry: Does the game support Ray Regeneration from AMD or Ray Reconstruction from Nvidia?

Pearl Abyss: Yes, we support both AMD FSR Ray Regeneration and Nvidia DLSS Ray Reconstruction. They each show their own excellent results, and provide sharper indirect shadows compared to our in-house denoiser.

this too good to be true

thinking I'ma be all in with this game for 3-4 weeks or some shyt.

Why would you say that?

PS5 Pro is the winner. I made the right choice to trade my base PS5 for PRO on the launch day.
