cyndaquil

Lv 100 Bold natured

"Cancel ChatGPT" movement goes big after OpenAI's latest move

After Anthropic refused flat out to agree to apply Claude AI to autonomous weapons and mass surveillance of American citizens, OpenAI jumps right into bed with the United States Department of War.

www.windowscentral.com

AI summary (Claude):

---

"Cancel ChatGPT" Movement Goes Mainstream

Source: Windows Central — February 28, 2026

The Big Split

Anthropic was designated a supply chain risk and banned from U.S. governmental agencies after refusing a deal with the Department of War. Anthropic drew two firm red lines:

- No use of Claude for autonomous weapons

- No mass surveillance of American citizens

OpenAI's Response

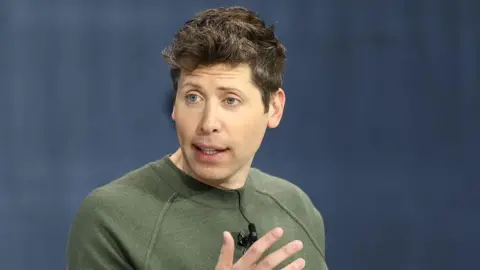

Sam Altman quickly stepped in, pledging ChatGPT and other OpenAI technologies to the U.S. government. Altman claimed on X that OpenAI's models wouldn't be used for mass surveillance, but a U.S. government official immediately contradicted him, saying the models would be used for "all lawful means." This matters because mass surveillance is lawful under certain Patriot Act provisions.

Anthropic wanted control over how its technologies would be used. Altman, by contrast, was happy to let the U.S. government decide.

Public Backlash

The move triggered a viral "Cancel ChatGPT" movement, with Reddit threads garnering thousands of upvotes from users cancelling their subscriptions.

"You're now training a war machine. Let's see proof of cancellation."

"Time to cancel ChatGPT Plus after three years. Anthropic got nuked for having ethics, and Sam Altman instantly swooped in for the Pentagon bag."

Where the Industry Stands

- Anthropic — Refused the deal. Banned from U.S. agencies as a result.

- OpenAI — Accepted. No hard limits beyond government interpretation of the law.

- Google — Removed its explicit ban on autonomous weapons last year.

- Microsoft — OK with autonomous weapons as long as a human pulls the final trigger.

- Amazon — No real prohibitions beyond vague "responsible use" language.

- Meta — Has actively courted Pentagon military contracts.

Editorial Note

The article is openly critical of Sam Altman and the broader AI industry's willingness to prioritize profit over ethics, while acknowledging Anthropic as a rare — if imperfect — exception.

to Anthropic.
