ChatGPT Brings Down Online Education Stocks. Chegg Loses 95%. Students Don’t Need It Anymore

- Thread starter bnew

IIVI

Superstar

Also an engineer and musician, two fields that ChatGPT struggles with. It spits out a lot of common-sense answers, but abstract thought is something it can't come up with, which isn't surprising.

It can manage concrete solutions to a degree, but any critical thinking request fails.

Yup. Plus I just don't think it has a large database of Circuits problems.

It's much easier to parse through a bunch of LaTeX questions and resolve the logic from there, but things like Circuits that have a lot of techniques, simplifications, multiple approaches, a lot of pictures, etc. it struggles with bad.

Sometimes with these circuit problems you solve one portion, then you need to redraw the circuit and find the new simplification. It's not doing that and it's leading it to crap out. It also for some reason doesn't recognize the difference between simple configurations.

Math I can see it being ok, it's basically an equation solver like Wolfram is that can use a little more context to get the specific Math problems correct. Signals class because it's straight up Math-heavy and basically a Math class will probably be easy for it.

However, yeah, things like Circuits, when it's a lot less "rote" than that and requires some critical thinking, it's struggling and can't solve its way out of a wet paper bag. I didn't even try to throw in any Op-Amps, so any basic Electronics class I'm sure it's not ready for either.

Right now a glorified Google is the best description for its current capabilities until it can show me the ability to solve problems like that.

The funny thing is, I actually want it to get better at solving these things so that I can delegate work to it, get correct answers quickly and check my understanding.

This. It sounds cute until life and death situations arise and you can’t ChatGPT your way out of it.

Because it can literally give you answers to circumvent needing to learn. Why bother writing an essay where my grammar is challenged when I can have GPT spit one out in seconds?

When ChatGPT first came out it was immediately used en masse for cheating in schools. That's a net negative for learning.

From my experience, I realized that to extract more value from large language models, I needed to expand my vocabulary and learn how to communicate more effectively to get the results I desired. I've used it to double-check my grammar and improve it in general. These things can be instructed to critique; it all depends on how it's used.

Art Barr

INVADING SOHH CHAMPION

Who said anything about changes to testing/exams? I doubt anyone would be allowed to use Chatgpt in an exam.

We’ve had google since 1998, how has that restricted the “recall of facts”?

Y'all do not even have text books for kids.

We can break this down to even hiphop culture.

You dudes on here know nuffin about hiphop

Simply because you think you are gonna Google it. When in hiphop, you are supposed to know all the relevant cultural norms and mores of every pillar without fail. As well as knowledge of self.

Yet none of you anywhere are nice in any pillar of hiphop.

Plus know none of the morals or norms of hiphop.

We can start there.

Just for starters.

What chu write?

you can not google the answer. You are supposed to know.

so answer the question?

Art Barr

Art Barr

INVADING SOHH CHAMPION

I used mathway.com a long time ago. It was ahead of its time really.

When I was getting my master's, folks got caught using ChatGPT to write their papers. Apparently the professors were told there wasn't anything in the guidelines about using AI, so they couldn't hold the students accountable. They had to add rules regarding AI and coursework to the guidelines the following semester. It was crazy that the school wasn't firm with that, because at the end of the day the students didn't write shyt. Clear case of plagiarism, but since it was technically original content from ChatGPT they didn't consider it that. I would have never even thought about doing that at a graduate level and risk getting kicked out.

This.

Art Barr

This is what happens when greed becomes more important than anything

Next generation gonna be dumb AF

And people that don't value education are really gonna feel it down the line

Learning how to think>>>>>>>>someone or something telling you what to do

A lot of these higher education degrees and shyt gonna start looking really funny in the light. But these cacs Gon say it's all merit.

IsThatBrothaMouzone?

Veteran

This has and always will be the case. I’m sure your job has the same people: a few who really know how to problem solve, the bulk who just know how to press buttons, and the others who are just there for a paycheck.

Yeah but that's after 12 years of school and a minimum of 4 years in college, some with 6 years and an M.S.

I'm late 30s and probably represent the median age of everyone in my department. We know how to cut corners but we also know how to do things the "hard way" with a pen, paper and calculator.

Problem is these kids only know the automated way, so they won't know how to check if something is wrong. In fact they won't know what "right-ish" and "wrong-ish" look like because in many cases there is no concrete 'correct' answer. There's levels of optimization.

Example - we recently switched to a web-based platform and there are A LOT of issues with calculations, contract language etc. We make it work because we know what we're doing and we know how to explain to IT what's wrong. Without the necessary knowledge, we'd just assume everything is OK and it would cost millions each quarter just off mistakes.

Who benefits from a whole generation of young adults not knowing how to problem solve? Likely someone that doesn't have their best interests at heart.

The bolded is 100% incorrect. The technology is typically off by some degree and people who are experienced in this technology are aware of this and are developing the skill set of being well versed enough in their domain to rapidly be able to check and correct small errors or completely modify models into something that is closer to the truth.

Not only are they doing that, but they're training their AI profiles to be more accurate in the process.

Y'all are about to have your entire feelings hurt by the kids who know how to use this technology and will eventually be 20x more effective. What's going to happen is that the kids will know the important things you actually need to know, and all the useless fluff will be cut out and become something only people with a passion for that particular piece of work will bother to learn.

IIVI

Superstar

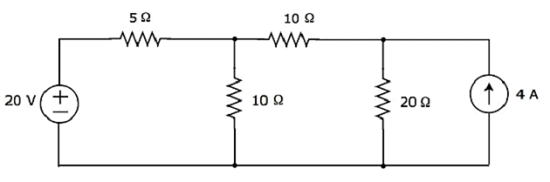

I got another good circuits task to ask of it.

Ask it to write the CircuitJS code for it. Copy the output it gives.

Then go to https://www.falstad.com/circuit/circuitjs.html

File > Import From Text and see how close it is

A lot of them don't even run!

I can't even use that thing to save time on basic Circuits stuff.

Chegg experts would laugh at this stuff.

Edit:

Bonus: ask it to find the current through the 20 Ohm resistor.

The correct answer is 2 Amps.

Do the same 30 minutes later. Does it even give the same wrong answer later or another completely different wrong answer?

My barometer is when it can start to solve stuff like this correctly, then I'll start taking note and being impressed.

Sauce Mane

Superstar

Ethnic Vagina Finder

The Great Paper Chaser

All ChatGPT is: an advanced Google search.

It’s not real AI.

interesting use case

i read a reddit post recently that basically said to have chatgpt write a python script to calculate X and Y, then instruct it to run the script against the problem. i haven't tried it but i wonder if that might be of some use to you.
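for a rough idea of what that kind of script could look like, here is a minimal sketch of nodal analysis in plain Python. everything in it is an assumption for illustration: the ladder circuit, the component values and the helper name `solve_linear` are made up, and it is not the circuit from the image in this thread. the point is only that the arithmetic gets done by code (and checked against KCL) instead of being guessed by the chatbot.

```python
from fractions import Fraction


def solve_linear(a, b):
    """Solve a*x = b with Gauss-Jordan elimination on exact fractions.

    Hypothetical helper for this sketch; raises StopIteration if the
    matrix is singular (no usable pivot is found).
    """
    n = len(b)
    # Augmented matrix of Fractions, so no rounding error creeps in.
    m = [[Fraction(v) for v in row] + [Fraction(rhs)] for row, rhs in zip(a, b)]
    for col in range(n):
        # Find a row with a non-zero pivot and swap it into place.
        pivot = next(r for r in range(col, n) if m[r][col] != 0)
        m[col], m[pivot] = m[pivot], m[col]
        # Scale the pivot row so the pivot becomes 1.
        p = m[col][col]
        m[col] = [v / p for v in m[col]]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col and m[r][col] != 0:
                factor = m[r][col]
                m[r] = [rv - factor * cv for rv, cv in zip(m[r], m[col])]
    return [row[n] for row in m]


# Hypothetical ladder circuit (NOT the one pictured in the thread):
#
#   VS --R1-- node1 --R3-- node2
#               |            |
#               R2           R4
#               |            |
#              GND          GND
VS = 22                      # source voltage in volts (assumed value)
R1, R2, R3, R4 = 1, 2, 1, 2  # resistances in ohms (assumed values)

# Conductances, g = 1/R.
g1, g2, g3, g4 = (Fraction(1, r) for r in (R1, R2, R3, R4))

# Nodal equations G * v = I, one KCL equation per unknown node voltage.
G = [[g1 + g2 + g3, -g3],
     [-g3, g3 + g4]]
I = [VS * g1, 0]

v1, v2 = solve_linear(G, I)
i_r4 = v2 * g4  # Ohm's law: current through R4 to ground

# Sanity check: the currents leaving node 1 must sum to zero (KCL).
residual = (v1 - VS) * g1 + v1 * g2 + (v1 - v2) * g3

print(f"v1 = {v1} V, v2 = {v2} V, current through R4 = {i_r4} A")
```

the KCL residual at the end is the useful part: if the chatbot sets up the equations wrong, a check like that should come out non-zero, which is exactly the "does this look right-ish" test people in this thread are worried about losing.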

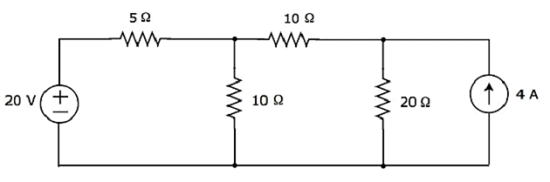

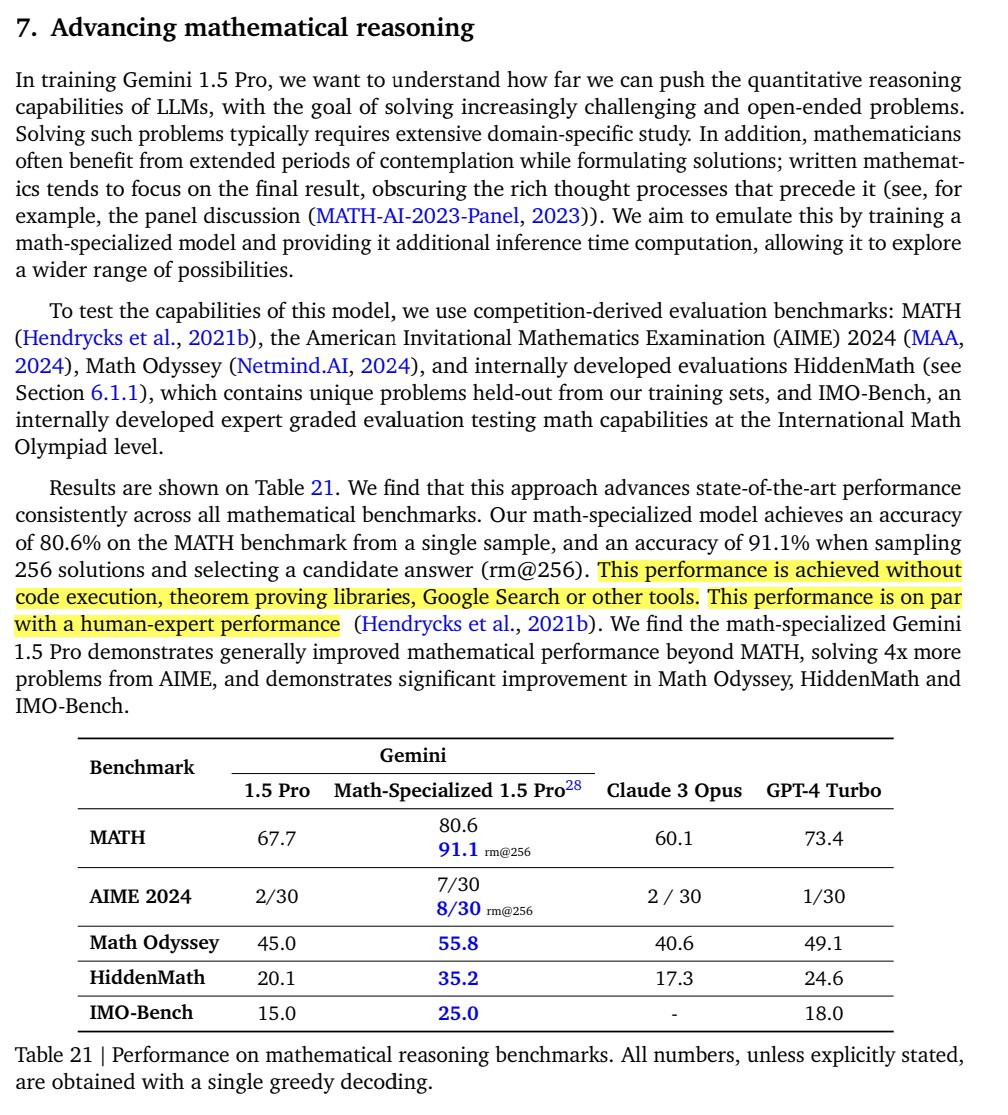

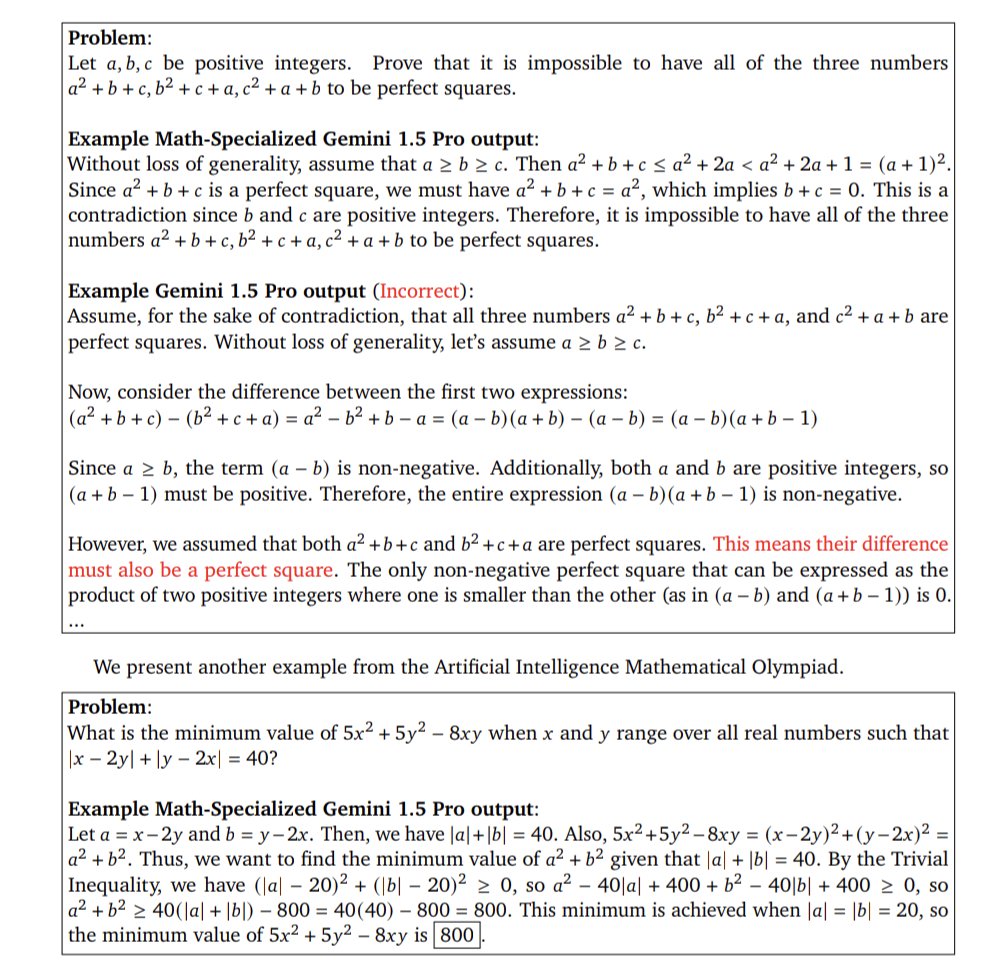

google recently set a new high standard in math.

1/1

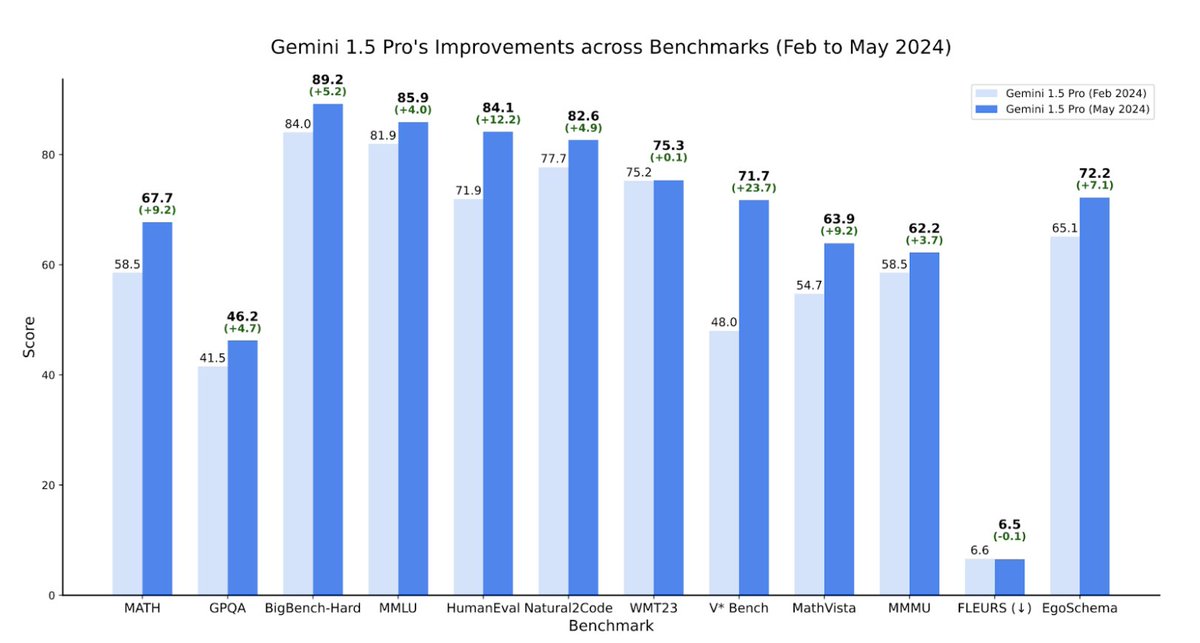

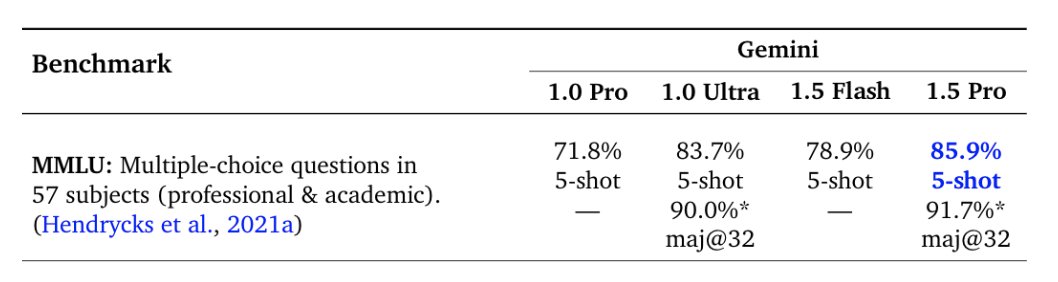

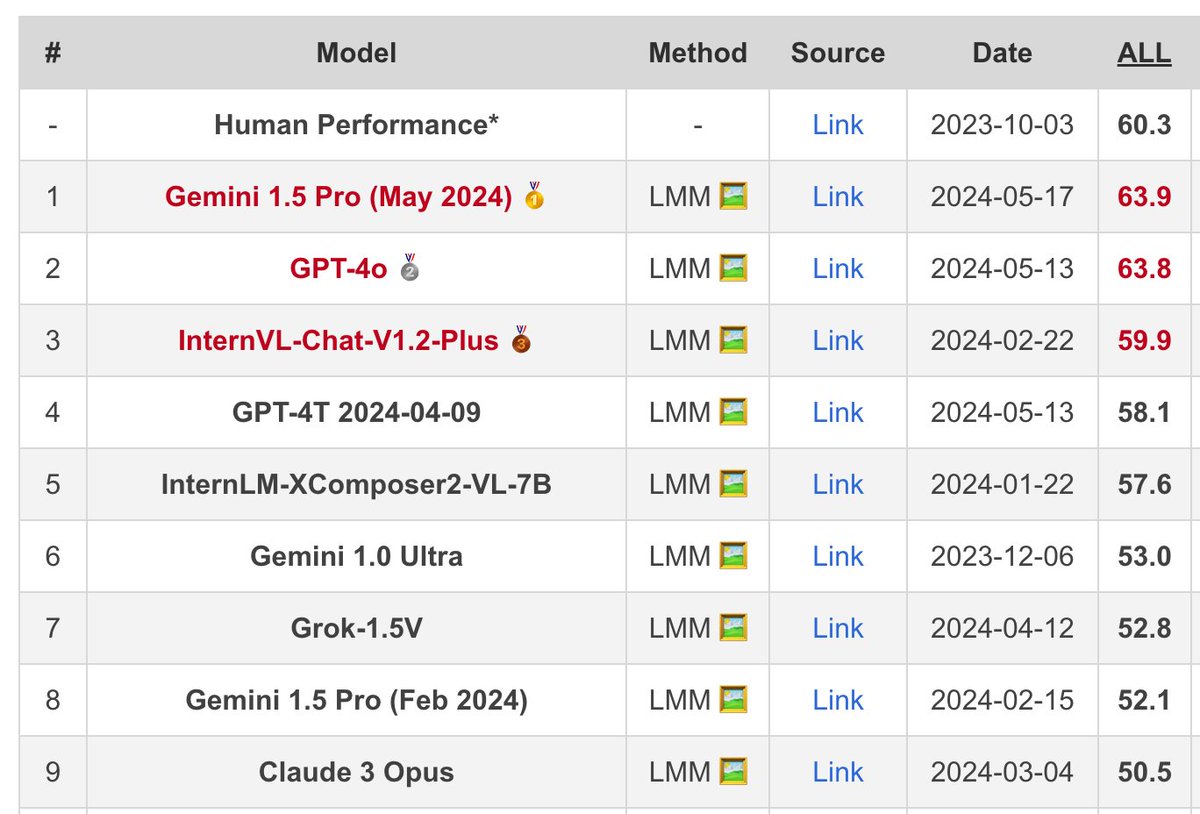

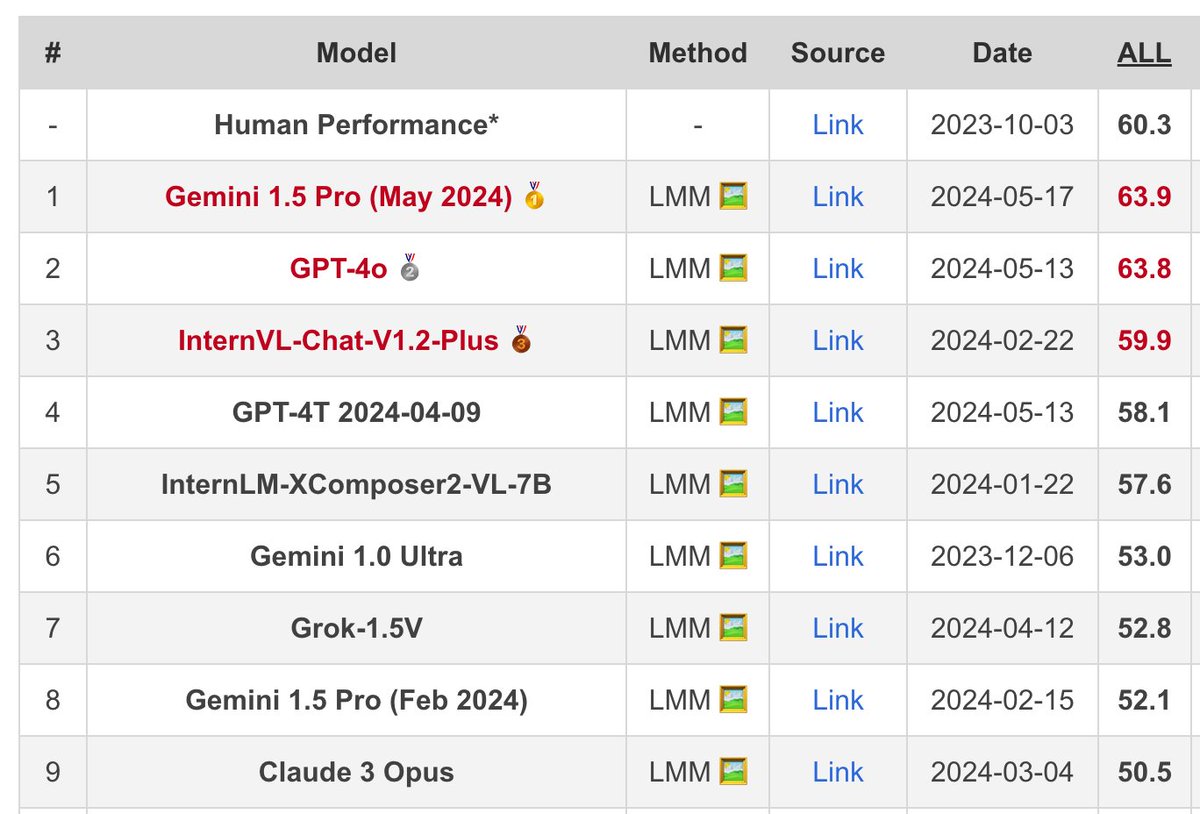

Updated Gemini 1.5 Pro report: MATH benchmark for specialized version now at 91.1%, SOTA 3 years ago was 6.9%, overall a lot of progress from February to May in all benchmarks

To post tweets in this format, more info here: https://www.thecoli.com/threads/tips-and-tricks-for-posting-the-coli-megathread.984734/post-52211196

1/7

A mathematics-specialized version of Gemini 1.5 Pro achieves some extremely impressive scores in the updated technical report.

2/7

From the report; 'Currently the math-specialized model is only being explored for Google internal research use cases; we hope to bring these stronger math capabilities into our deployed models soon.'

3/7

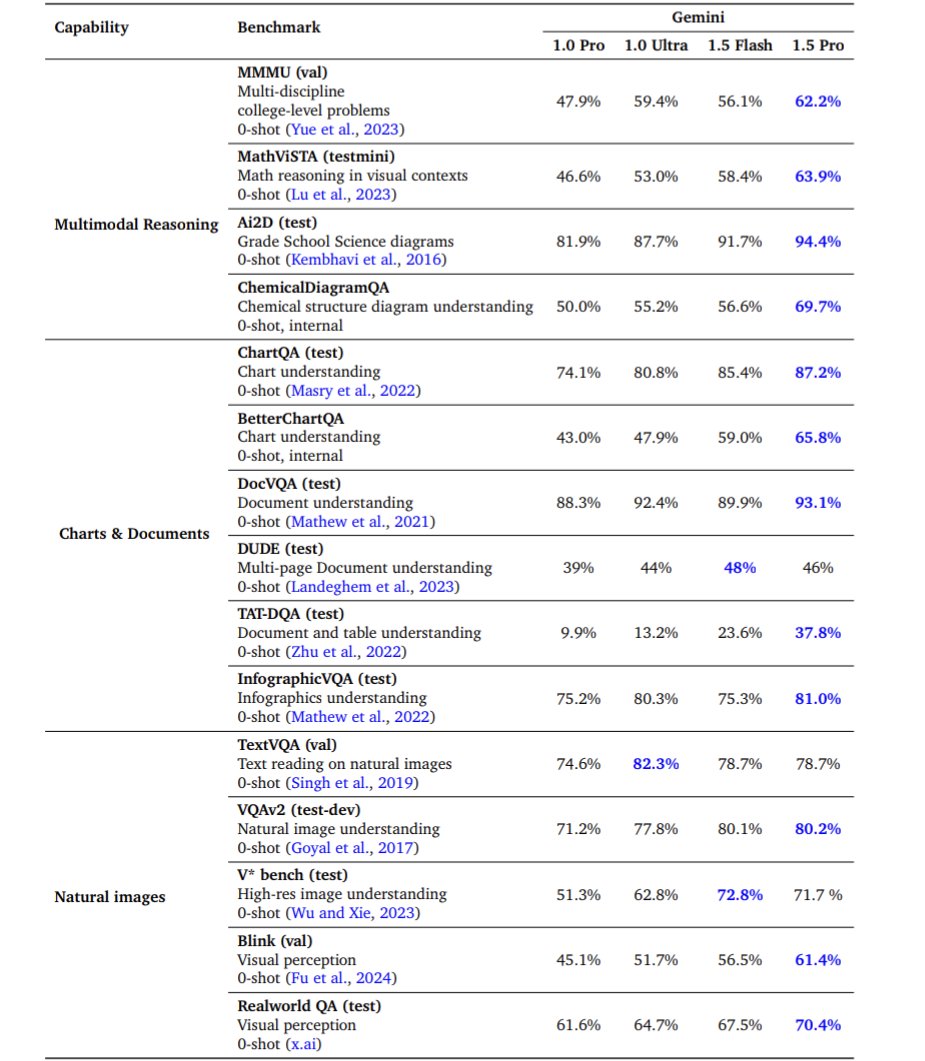

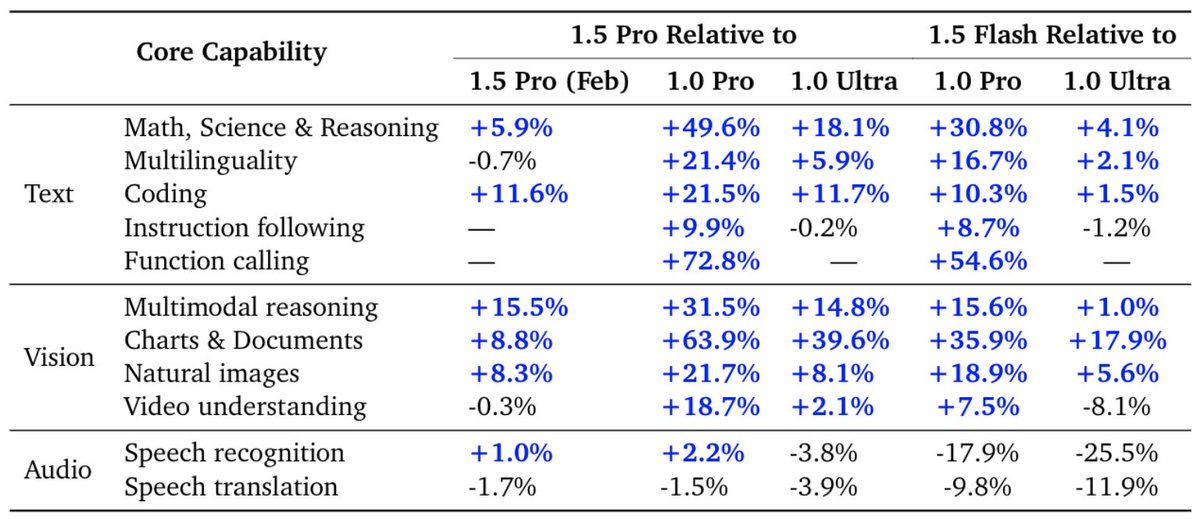

New benchmarks, including Flash.

4/7

Google is doing something very interesting by building specialized versions of its frontier models for math, healthcare, and education (so far). The benchmarks on all of these are pretty impressive, and it seems to be beyond what can be done with traditional fine tuning alone. twitter.com/jeffdean/statu…

5/7

1.5 Pro is now stronger than 1.0 Ultra.

6/7

4o only got to enjoy the crown for 4 days.

7/7

They put Av_Human at the top of the chart there visually to make people feel better. The average human is now in third place.

IIVI

Superstar

Kind of like what I mentioned, I don't think Math is its main problem. I can throw Calculus, Linear Algebra, Differential Equations at it and I think it'd do fine for the most part because it's basically rote. If X, do Y.

Circuits though it's absolute trash at solving. Can't trust it at all. I've looked at multiple libraries, but nothing. Maybe the closest is the Flux app and that's still wacker than Chegg.

The Engineering subreddits know how bad it is as well. Probably as bad in most Engineering courses to be honest.
